As you have probably read in other articles, I love data. I’m not ashamed. It’s a passion and I’m proud. From social science behavioral data to pure quantitative performance metrics; from big data to little data; from messy, dirty data to well-formed and beautifully curated data repositories. Come one, come all. I won’t discriminate, and I won’t judge.

Defining Data Analysis Tools

Let me get some of my personal definitions out there in the open. You don’t have to agree, but this is how I have come to categorize both the tools and the approaches to data they support. First, there is business intelligence. Primarily, this refers to transforming data into graphics and summary statistics to help business leaders (managers) make informed decisions. It’s straightforward and, well, not that complicated. A picture is worth a thousand words, some say, and that’s what makes business intelligence tools so popular. They are largely “plug and play” and turn tabular data into a dashboard.

If this were an evolutionary description, then the second stage would be the data analytics tools and platforms. These perform as self-described and help produce linear statistics from data sets. Whereas the business intelligence lot may be able to produce a regression line, these tools are focused on higher-level statistics, but still with regression models in mind. I think of forecasting/time series tools, or multivariate regressions and correlations, all helping to identify trends and outliers but still looking at relatively simple data to make some future predictions based on past performance. This is the “analysis” in data analytics, always looking to a fixed past set of data to draw linear inferences and describe patterns; the “normal” in the normal curve.
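To make the "regression line plus a forecast" idea concrete, here is a minimal sketch in plain Python. It is not what any particular analytics tool does internally. The function names (`fit_line`, `predict`) and the sales figures are made up for illustration, and the data is deliberately perfectly linear so the result is easy to check by hand.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Spread of x around its mean, and how x and y move together.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def predict(slope, intercept, x):
    """Extend the fitted line to a new x value."""
    return slope * x + intercept


# Made-up monthly sales figures, for illustration only.
months = [1, 2, 3, 4, 5, 6]
sales = [10.0, 12.0, 14.0, 16.0, 18.0, 20.0]

slope, intercept = fit_line(months, sales)
forecast = predict(slope, intercept, 7)
print(f"trend: {slope:.2f} per month, month-7 forecast: {forecast:.2f}")
```

The forecast is nothing more than the past trend extended one step forward, which is exactly the "fixed past set of data" limitation described above.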

The third stage focuses on complex, multidimensional data and on predicting the future state of something, or which class it will belong to. Collectively, this is known as machine learning or data science. These tools will do all of the foregoing, but mostly as a means to prepare data for the machine learning algorithms that power the predictions. They can extract data from anywhere (matrices, key-value pairs, images, molecular structures, etc.), transform that data into the appropriate shape, and apply a learning algorithm, often alongside a broad suite of algorithmic tools that help build models.

Why KNIME Reigns Supreme

When it comes to working with data, there is no tool I love more than KNIME. It has a lot going for it. It’s open source (think, “it’s free!”). The graphical user interface is simple to work with and makes it easy to explain to others what you have done. All of the workflows are reusable. There is easy support for other technologies I like to use, too, like the R language, Python, multiple SQL and NoSQL databases, and cloud-based data tools like BigQuery.

To be honest, I am so spoiled now that the thought of ever doing data analysis in a spreadsheet gives me the heebie-jeebies. The hair on the back of my neck stands up, and I break into a cold sweat. I feel an instant need to run. Those days were awful. When I think of all the time I wasted re-doing what I had already done a thousand times before…well, you just can’t get that back.

A Deep Dive Into KNIME

KNIME 4.4.1 is the current release as of this writing (September 2021). It contains a bunch of goodies, and the look and feel have been improved over the last iteration. They have continued with the “read from” and “write to” anywhere mentality and expanded those capabilities. This can get pretty technical, but suffice it to say that KNIME handles small datasets and huge ones (like those stored on Hadoop clusters) beautifully. It also has nodes for RESTful data requests. If you don’t know what that means, it is simply a way to pull data down from remote servers, including those out on the internet.
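For the curious, here is roughly what a RESTful data request boils down to, sketched in plain Python rather than with KNIME nodes. Everything named here is an assumption for illustration: the helper functions `fetch_json` and `to_rows`, the example URL in the closing comment, and the canned response body, which stands in for a real server so the sketch runs without a network connection.

```python
import json
from urllib.request import urlopen


def fetch_json(url, timeout=10):
    """Send an HTTP GET request and decode the JSON body of the reply."""
    with urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def to_rows(records, columns):
    """Flatten a list of JSON objects into table rows.

    Fields missing from a record become None, much like an empty cell.
    """
    return [[record.get(col) for col in columns] for record in records]


# Canned, made-up response body standing in for a remote server's reply.
sample_body = '[{"id": 1, "city": "Austin"}, {"id": 2, "city": "Berlin", "value": 3.6}]'

rows = to_rows(json.loads(sample_body), ["id", "city", "value"])
print(rows)

# Against a live (hypothetical) service the same step would look like:
#   rows = to_rows(fetch_json("https://example.com/api/records"), ["id", "city", "value"])
```

The point is only that "REST" means asking a server for data over ordinary web requests and then reshaping the answer into a table.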

Recent additions to KNIME around so-called “big data” mean small machines can do big things. KNIME was always built with performance in mind, but the addition of some Spark nodes and parallel processing enhancements means data can get really big. I have personally chewed through hundreds of millions (100,000,000+) of lines of data on a laptop without much trouble. This can be helpful if you are doing some complex analysis and want to compare many features inside your data.

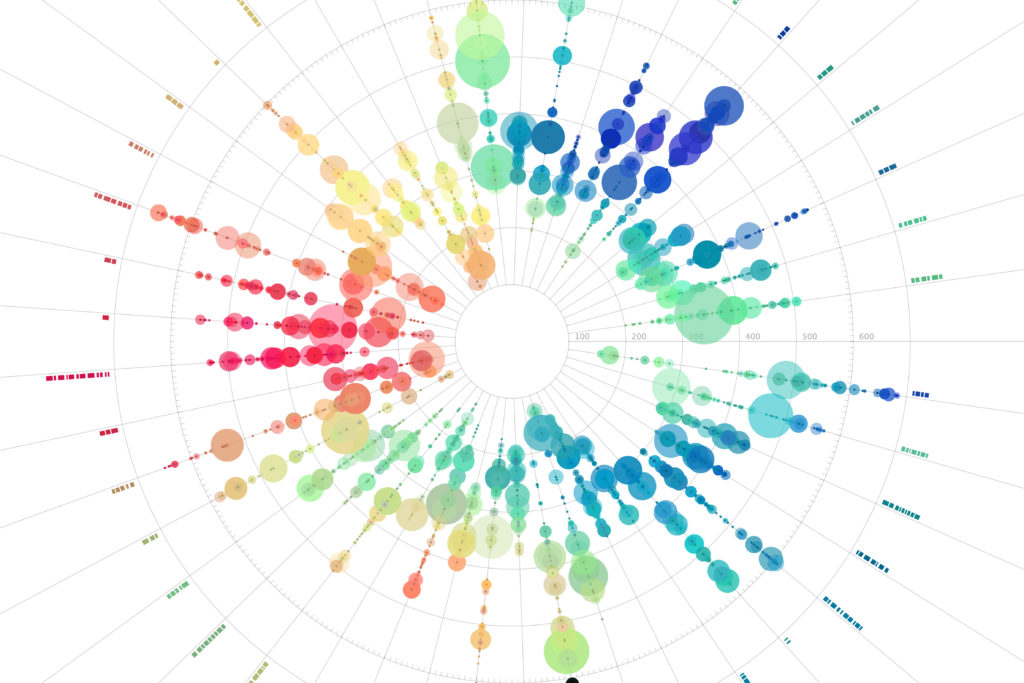

Mapping has always been a part of KNIME, too. However, these nodes have also been expanded and improved. There are nodes to read ESRI data files and nodes to draw beautiful maps with custom colors and features to represent data qualities. Simply put, you can use any data that contains latitude and longitude coordinates to create rich visuals. If you want to geek out (oh, me too!), you can use some popular libraries for mapping from tools like R to create super-rich geospatial analyses.

The analytics portion of KNIME is, of course, the fun stuff. Data transformation (cleaning up all that legacy data, melding disparate data sources, etc.) is robust, and I haven’t yet run into anything I couldn’t do. There is also a full suite of statistics tools. I mean full: regression, correlation, ANOVA…Cronbach’s alpha, anyone? But the real excitement is inside the machine learning suite. This is where you can start to build out models around similarity (is this property really like the others), distance (which entities are similar and which are different), or trends. There are nodes to guide you through time series analysis (looking at changing patterns over time). You can even finish off by building some predictive models: analyses that can help you classify unknown entities into certain groups.
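If "distance" and "classifying unknown entities" sound abstract, here is a minimal sketch of one classic approach, a k-nearest-neighbors vote, in plain Python. This is my own illustration and not how KNIME implements its learners. The function names and the property data are invented, and the two features are assumed to be on comparable scales.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Straight-line distance between two equal-length feature tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_classify(labeled, query, k=3):
    """Label `query` by majority vote among its k closest labeled examples.

    `labeled` is a list of (features, label) pairs.
    """
    nearest = sorted(labeled, key=lambda item: euclidean(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Made-up properties described by two illustrative features.
properties = [
    ((1.0, 1.0), "residential"),
    ((1.2, 0.8), "residential"),
    ((0.9, 1.1), "residential"),
    ((5.0, 4.0), "commercial"),
    ((5.5, 4.2), "commercial"),
    ((4.8, 3.9), "commercial"),
]

unknown = (1.1, 0.9)
label = knn_classify(properties, unknown)
print(f"{unknown} looks most like: {label}")
```

The unknown property sits close to the first group, so it gets that group's label. That is the whole idea behind distance-based classification: entities that are near each other in feature space are treated as alike.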

Final Perspective

The KNIME core team is wonderful to work with, both technically and from a business perspective. Their leadership is a solid group of intellectual creatives who are steeped in data science but remain approachable and genuine. There were, up until the pandemic, wonderful learning and community engagement events in Berlin and Austin annually. I always enjoyed them both and hope for their eventual return.

If you are into data or just getting started with a data initiative, give KNIME a try. If you have any questions, feel free to reach out. I am always happy to talk data, tools, and methods, and I am rarely offended if you want to cut me off when I ramble on for too long. My wife has trained me well in deciphering the facial expressions and nonverbals of those who have received far more than they wished for by asking what was to them just a simple question. I will, therefore, try to keep my comments brief, but it is hard to contain enthusiasm sometimes.